Key Takeaways

Playbooks structure DeFi growth decisions into repeatable sequences based on context. Liquidity, activation, or retention constraints determine which sequence applies.

DeFi growth success depends on diagnosing the real bottleneck first. Misreading activation, liquidity, or retention leads to wasted incentives and broken funnels.

Teams must test each playbook step carefully against their own baseline metrics before scaling or committing resources.

What you will get: three archetypal growth playbooks drawn from real DeFi protocol patterns, an honest account of what worked and what failed in each, the common patterns that recur across successful protocols, the mistakes that recur across failed growth attempts, and a framework for adapting any playbook to your own protocol stage.

Why Playbooks Matter More Than Theory

Growth theory in DeFi is well-developed. There are frameworks for activation, retention, loops, and lifecycle management. Most DeFi teams can describe these concepts accurately. Few can execute against them consistently. The gap is not knowledge. It is pattern recognition: knowing which move to make, in which order, given where the protocol actually is.

Playbooks close that gap. A playbook is not a prescription to follow blindly. It is a documented sequence of decisions made by a protocol in a specific context, including what was tried, what worked, what failed, and why. The value is not in copying the decisions. It is in understanding the reasoning behind them well enough to adapt them to a different context.

The three playbooks in this article are drawn from recurring patterns observed across DeFi protocol launches and growth attempts. No specific protocols are named because the patterns are composite, not singular. Each one represents a recognisable archetype: the protocol that grew primarily through liquidity depth, the one that fixed activation and unlocked compounding user growth, and the one that built retention mechanics into the product before scaling acquisition. For the theoretical framework each playbook sits within, the onchain growth guide covers the complete model.

Playbook 1: Liquidity-Driven Growth Loop

This pattern recurs most frequently in DEXs and lending protocols where liquidity depth is the primary product quality signal. The protocol's competitive position is determined by how much capital it holds relative to peers, because deeper liquidity means tighter spreads or better borrow rates, which means a better product for every user.

The Starting Condition

The protocol launches with moderate liquidity, sufficient to support small transactions but not competitive for larger ones. Acquisition brings in users, but conversion to active traders is low because slippage or rates compare unfavourably to established alternatives. The team recognises that product quality is gating growth: more users will not help until the product is better, and the product will not get better without more capital.

The Sequence of Moves

The first move is targeted liquidity seeding rather than broad incentive programmes. Instead of distributing rewards across all pools, the team concentrates capital into the two or three pairs or markets where genuine organic demand already exists. The goal is to create competitive depth in a narrow set of areas rather than mediocre depth everywhere.

The second move is measuring whether the liquidity improvement is actually changing user behaviour. This is the step most teams skip. Deeper liquidity is only valuable if it translates into better execution, and better execution only compounds growth if users notice and return. Onchain attribution makes it possible to connect the liquidity change to measurable shifts in transaction completion rates and repeat usage, separating the effect of the capital from background market conditions.
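
One way to separate the effect of the capital from background market conditions is a difference-in-differences comparison. This is a technique swapped in here for illustration, not one the playbook names. The sketch below assumes you can compute transaction completion rates for the seeded pools and for a comparable set of unseeded "control" pools, before and after seeding. The function names and all counts are hypothetical.

```python
def completion_rate(completed: int, initiated: int) -> float:
    """Share of initiated transactions that completed."""
    if initiated == 0:
        raise ValueError("no initiated transactions in this window")
    return completed / initiated


def diff_in_diff(seeded_before: float, seeded_after: float,
                 control_before: float, control_after: float) -> float:
    """Change in the seeded pools minus the change in the control pools."""
    return (seeded_after - seeded_before) - (control_after - control_before)


# Hypothetical (completed, initiated) counts for each window.
seeded_before = completion_rate(620, 1000)   # 0.62
seeded_after = completion_rate(780, 1000)    # 0.78
control_before = completion_rate(600, 1000)  # 0.60
control_after = completion_rate(640, 1000)   # 0.64

lift = diff_in_diff(seeded_before, seeded_after, control_before, control_after)
print(f"Completion-rate lift net of the market-wide change: {lift:.2%}")
```

The estimate is only as good as the control group: it assumes the unseeded pools would have moved the same way as the seeded ones had no capital been added.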

The third move is using the volume generated by improved execution to build the case for organic LP participation. LPs who are attracted to fee income from genuine trading activity are structurally different from LPs who are attracted to token emissions. The protocol uses the volume data to make the fee income case visible, surfacing it in the UI so prospective LPs can evaluate the return without relying on token reward projections.

The fourth move is extending into integrations once competitive depth exists in the core markets. Integration partners route users to liquidity sources that offer the best execution. A protocol that has achieved genuine depth in its core markets becomes the preferred routing destination, bringing in transaction volume from partner protocols without direct acquisition spend.

What Worked

Concentrating liquidity in high-demand pairs before expanding. Depth in a narrow set of markets produced competitive execution quality faster than spreading capital thinly across many pools.

Making fee income visible to prospective LPs. Showing historical fee generation from real trading volume attracted LPs motivated by genuine yield rather than token rewards, which proved more durable when incentives were adjusted.

Using volume data to qualify integration partners. Approaching integration conversations with transaction volume and execution quality data, rather than TVL alone, resulted in integrations that routed genuine users rather than adding impressions without transactions.

What Failed

Broad incentive distribution before competitive depth existed. Early attempts to grow TVL across all pools simultaneously produced shallow liquidity everywhere, which did not change execution quality for any user and did not attract the volume needed to sustain LP interest.

Measuring TVL rather than volume-to-TVL ratio. TVL growth that was not matched by proportional volume growth was an early warning sign that capital was parked rather than productive. The team initially celebrated TVL milestones that did not reflect genuine protocol usage.
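
As a rough illustration of the volume-to-TVL check, the sketch below flags pools whose trading volume is small relative to the capital they hold. The `PoolSnapshot` type, the 7-day window, the pool figures, and the `MIN_RATIO` threshold are all assumptions made for the example; a sensible threshold depends on the protocol and its peers.

```python
from dataclasses import dataclass


@dataclass
class PoolSnapshot:
    name: str
    tvl_usd: float
    volume_7d_usd: float


def volume_to_tvl(pool: PoolSnapshot) -> float:
    """Trading volume over the window divided by capital held."""
    if pool.tvl_usd <= 0:
        raise ValueError(f"{pool.name}: TVL must be positive")
    return pool.volume_7d_usd / pool.tvl_usd


def parked_pools(pools: list[PoolSnapshot], min_ratio: float) -> list[str]:
    """Names of pools where capital looks parked rather than productive."""
    return [p.name for p in pools if volume_to_tvl(p) < min_ratio]


# Hypothetical snapshots.
pools = [
    PoolSnapshot("ETH/USDC", tvl_usd=10_000_000, volume_7d_usd=25_000_000),
    PoolSnapshot("LONGTAIL/ETH", tvl_usd=4_000_000, volume_7d_usd=200_000),
]
MIN_RATIO = 0.5  # hypothetical threshold, not a standard

flagged = parked_pools(pools, MIN_RATIO)
print(flagged)
```

Tracking this ratio next to each TVL milestone makes it harder to celebrate capital that is not being used.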

Transferable principle: Liquidity-driven growth requires competitive depth before acquisition. Bringing users to mediocre liquidity produces low activation and no loop. Concentrate capital first, then expand.

Playbook 2: Activation-Focused Protocol

This pattern recurs in protocols that have genuine product-market fit signals: retained users exist, repeat transactions occur, but most new wallets never complete a first transaction. The product works for users who get to it. Most users do not get to it.

The Starting Condition

The protocol has run several acquisition campaigns and generates regular wallet connects. Retention among users who do transact is reasonable: a meaningful cohort returns and engages repeatedly. But first-transaction rates are low. The majority of connected wallets never complete a transaction. Growth is constrained not by the product's ability to retain users but by its ability to convert new arrivals into first-time users.

The diagnosis is confirmed by funnel data: connects are high, initiation rates are low, and the gap between connect and initiate is where most wallets are lost. This is a pure activation problem. The onchain activation guide covers the full funnel and the friction points that cause this specific pattern.

The Sequence of Moves

The first move is pausing acquisition spend. Adding more wallets to a funnel that loses most of them at the activation stage accelerates waste, not growth. The team temporarily reduces campaign spend and redirects the resource toward fixing the activation experience.

The second move is identifying where in the activation funnel the drop-off is concentrated. The team measures the rate at each stage: connect to explore, explore to initiate, initiate to confirm, confirm to complete. The largest single drop is at initiation: users land, look at the protocol interface, and leave without starting a transaction. This points to an orientation problem rather than a gas or wallet friction problem.
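
The stage-by-stage measurement can be sketched as follows. The stage names come from the funnel described above; the wallet counts are hypothetical and chosen so that the largest drop falls at initiation, matching the pattern in this playbook.

```python
STAGES = ["connect", "explore", "initiate", "confirm", "complete"]


def stage_conversion(counts: dict[str, int]) -> list[tuple[str, float]]:
    """Conversion rate for each consecutive pair of funnel stages."""
    rates = []
    for prev, nxt in zip(STAGES, STAGES[1:]):
        if counts[prev] == 0:
            raise ValueError(f"no wallets reached '{prev}'")
        rates.append((f"{prev} -> {nxt}", counts[nxt] / counts[prev]))
    return rates


def largest_drop(counts: dict[str, int]) -> tuple[str, float]:
    """The step with the lowest conversion rate."""
    return min(stage_conversion(counts), key=lambda step: step[1])


# Hypothetical wallet counts per stage.
counts = {
    "connect": 10_000,
    "explore": 7_000,
    "initiate": 1_400,
    "confirm": 1_120,
    "complete": 1_008,
}

for step, rate in stage_conversion(counts):
    print(f"{step}: {rate:.0%}")

worst_step, worst_rate = largest_drop(counts)
print(f"Largest drop: {worst_step}")
```

Comparing step-to-step rates rather than the overall connect-to-complete rate is what points the fix at the right stage.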

The third move is redesigning the new-wallet landing experience using progressive disclosure. The team identifies the single most common first transaction among retained users and makes it the default state for new wallet connects. Advanced options are available but not surfaced. A brief, plain-language explanation of what the action does sits above the primary entry point. Gas cost is shown before amount entry.

The fourth move is validating the change on a small cohort before resuming full acquisition spend. The team runs the new landing experience on a defined group of new wallet connects, measures connect-to-transaction rate over a two-week window, and compares against the prior baseline. When the rate improves to a level consistent with the retained user cohort's activation pattern, acquisition spend is resumed.
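
To check that the cohort's rate is a real improvement over the baseline rather than noise, one common option is a two-proportion z-test. The playbook does not specify a statistical method, so treat this as one possible sketch. The function name and the counts are hypothetical.

```python
import math


def two_proportion_z(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, one-sided p-value) for rate_b > rate_a, using a pooled standard error."""
    if n_a <= 0 or n_b <= 0:
        raise ValueError("both cohorts need at least one wallet")
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("rates are degenerate (all or no wallets converted)")
    z = (rate_b - rate_a) / se
    p_value = 0.5 * math.erfc(z / math.sqrt(2))  # P(Z >= z) under the null
    return z, p_value


# Hypothetical counts: prior baseline (a) vs. the small validation cohort (b).
z, p_value = two_proportion_z(conv_a=300, n_a=5000, conv_b=110, n_b=1000)
print(f"z = {z:.2f}, one-sided p = {p_value:.2g}")
```

A significant result only says the difference is unlikely to be chance. It does not rule out a novelty effect, which is why the measurement window and the comparison against the retained cohort's pattern still matter.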

What Worked

Pausing acquisition before fixing activation. Teams that continue spending on acquisition while activation is broken are funding a leaky bucket. The pause forced the team to solve the right problem before scaling spend.

Single-default landing state for new wallets. Reducing the number of decisions a new wallet faces on arrival materially improved the initiation rate. The key insight was that most new wallets do not need options. They need orientation.

Validating on a small cohort before scaling. Running the new experience on a contained group first meant the team could confirm the improvement was real and not a novelty effect before committing to it as the default experience.

What Failed

Redesigning the homepage before diagnosing the funnel. An early attempt to improve activation involved a full homepage redesign. Funnel analysis later revealed that most drop-off was happening after users had navigated past the homepage to the action interface. The homepage redesign improved nothing measurable.

Adding a product tour instead of simplifying the first action. A guided product tour was built and deployed as an activation fix. Users dismissed it before completing it. The tour added steps to the activation journey rather than removing them. Drop-off at initiation remained unchanged.

Transferable principle: Activation problems require funnel diagnosis before intervention. The fix must target the specific step where users drop off, not the step the team assumes is the problem.

Playbook 3: Retention-First Protocol

This pattern recurs in protocols where activation is working reasonably well but the user base is not compounding. Each acquisition cohort activates and then largely disappears. TVL and active wallet counts are maintained by continuous acquisition spend rather than by a growing base of returning users. The protocol is running on a treadmill.

The Starting Condition

The protocol has a functioning acquisition channel and acceptable activation rates. The problem surfaces when cohort analysis is applied to the active user base: the proportion of returning users relative to new users is declining over time. The active user count is holding, but increasingly it is composed of new wallets, not returning ones. The user lifecycle analysis guide describes how to identify this specific pattern before it becomes critical.
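
A minimal sketch of that cohort check, assuming you have the set of active wallet addresses for each period in chronological order. The function name and the wallets are hypothetical. The example is built so the active count holds at four while the returning share falls.

```python
def returning_share(active_by_period: list[set[str]]) -> list[float]:
    """For each period, the share of active wallets seen in any earlier period."""
    seen: set[str] = set()
    shares = []
    for wallets in active_by_period:
        if wallets:
            shares.append(len(wallets & seen) / len(wallets))
        else:
            shares.append(0.0)
        seen |= wallets
    return shares


# Hypothetical weekly active wallets: the count is flat, the mix is not.
weeks = [
    {"a", "b", "c", "d"},
    {"a", "b", "c", "e"},
    {"a", "b", "f", "g"},
    {"a", "h", "i", "j"},
]

shares = returning_share(weeks)
print(shares)
```

The first period is always zero because there is no history to return from, so read the trend from the second period onward.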

The Sequence of Moves

The first move is identifying what the retained minority is doing differently. In every protocol with weak overall retention, a small cohort of users returns consistently. The team segments by behaviour and studies the pattern: what actions did retained users take in their first session that one-time users did not? What positions do they hold? What features do they use?
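
One simple way to approach the first-session question is to compare how often each action appears in the first sessions of retained wallets versus one-time wallets. The action labels and sessions below are hypothetical, and the function names are illustrative.

```python
from collections import Counter


def action_rates(first_sessions: list[set[str]]) -> dict[str, float]:
    """Share of wallets whose first session included each action."""
    if not first_sessions:
        raise ValueError("segment is empty")
    counts = Counter(action for session in first_sessions for action in session)
    return {action: n / len(first_sessions) for action, n in counts.items()}


def distinguishing_actions(retained: list[set[str]],
                           one_time: list[set[str]]) -> list[tuple[str, float]]:
    """Actions ranked by how much more common they are among retained wallets."""
    r, o = action_rates(retained), action_rates(one_time)
    gaps = {a: r.get(a, 0.0) - o.get(a, 0.0) for a in set(r) | set(o)}
    return sorted(gaps.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical first-session action sets, one per wallet.
retained = [{"swap", "open_position"}, {"open_position"},
            {"swap", "open_position"}, {"swap"}]
one_time = [{"swap"}, {"swap"}, {"swap"}, {"swap", "open_position"}]

ranked = distinguishing_actions(retained, one_time)
print(ranked)
```

A large gap is a lead to investigate, not proof of cause: wallets that open positions may differ from the rest in ways that have nothing to do with the action itself.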

The second move is identifying the recurring job that keeps retained users coming back. For this protocol, the pattern is clear: retained users hold open positions that require periodic attention. One-time users completed a single transaction with no natural follow-up. The product does not create a recurring job for users who do not hold positions, which is the majority of activations.

The third move is building the retention mechanic directly into the activation flow. Rather than treating retention as a separate post-activation problem, the team redesigns the first-transaction experience to end with an open position where possible. For a lending protocol, this means guiding first-time borrowers toward opening a position rather than just exploring rates. For a DEX, it means offering an LP position as the natural follow-up to a first swap.

The fourth move is adding triggered communication for users who do open positions. Position health alerts, fee accumulation summaries, and rate change notifications create reasons to return that are tied to the user's own financial position. These are not marketing communications. They are product communications that serve a genuine informational need and generate return visits with meaningful onchain actions.
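
The difference between triggered and scheduled communication can be sketched as a rule that fires only on the state of the user's own position. The `Position` record, its fields, the alert names, and both thresholds are hypothetical. The record mixes lending and LP fields purely for brevity.

```python
from dataclasses import dataclass


@dataclass
class Position:
    wallet: str
    health_factor: float       # collateral relative to debt; lower is riskier
    unclaimed_fees_usd: float  # LP fees accrued but not yet claimed


def due_alerts(position: Position,
               min_health: float = 1.2,
               min_fees_usd: float = 50.0) -> list[str]:
    """Alerts justified by the position's current state; empty if none apply."""
    alerts = []
    if position.health_factor < min_health:
        alerts.append("position_health")
    if position.unclaimed_fees_usd >= min_fees_usd:
        alerts.append("fee_summary")
    return alerts


# Hypothetical positions.
at_risk = Position("0xabc", health_factor=1.1, unclaimed_fees_usd=10.0)
earning = Position("0xdef", health_factor=2.0, unclaimed_fees_usd=75.0)
quiet = Position("0x123", health_factor=2.0, unclaimed_fees_usd=5.0)

for p in (at_risk, earning, quiet):
    print(p.wallet, due_alerts(p))
```

A wallet with nothing to act on receives nothing, which is the property that separates this from a newsletter.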

What Worked

Studying the retained minority before building anything. The team spent time understanding why retained users returned before deciding what to build. This prevented the most common retention mistake: building features that seem like retention tools but do not map to the actual reason users return.

Connecting retention to activation rather than treating them separately. Designing the first-transaction experience to end with an open position created the recurring job at the moment of highest intent. Trying to re-engage users who had completed and closed a transaction was much less effective than creating an ongoing connection from the start.

Position-triggered communication over schedule-based communication. Alerts triggered by the user's own position events produced higher re-engagement than scheduled newsletters or promotional communications. The trigger was relevant because it was tied to something the user already cared about.

What Failed

Retention incentives applied to the full user base. An early attempt to improve retention involved applying token rewards to all active wallets. Most recipients farmed the reward and churned. The small genuinely retained cohort was indistinguishable from the farmers in the aggregate metrics, making it impossible to measure whether the incentive was working for the right users.

Newsletter and social re-engagement campaigns. Off-chain re-engagement campaigns produced wallet reconnects but not onchain transactions. Users who returned in response to an email or social post did not activate a position and churned again quickly. Re-engagement that does not end in an onchain action is not retention.

Transferable principle: Retention is a product design problem, not a communications problem. The recurring job must be built into the protocol before re-engagement communications can do anything useful.

What Worked vs What Failed Across All Three Playbooks

The three playbooks share recurring patterns in both their successes and their failures. Recognising these patterns before committing to a growth approach can prevent the most common and expensive mistakes.

| Playbook | What Consistently Worked | What Consistently Failed |
| --- | --- | --- |
| Liquidity Loop | Concentrating capital in high-demand areas before expanding. Making fee income from real volume visible to LPs. Using execution quality data in integration conversations. | Broad incentive distribution before depth existed. Measuring TVL as a success signal without checking volume-to-TVL ratio. |
| Activation Focus | Pausing acquisition to fix the funnel. Single-default landing states for new wallets. Small-cohort validation before scaling changes. | Redesigning the wrong step without funnel diagnosis. Adding guided tours that increased steps rather than reducing friction. |
| Retention First | Studying the retained minority before building. Connecting retention mechanics to the activation flow. Position-triggered communication tied to real user events. | Applying retention incentives to the full user base indiscriminately. Using off-chain re-engagement campaigns that produced reconnects but not transactions. |

Common Growth Patterns Across Successful Protocols

Across the three playbooks and the broader set of DeFi growth patterns they represent, five principles recur in protocols that achieve compounding growth. These are not tactics. They are structural dispositions that shape how growth decisions are made.

Fix the Broken Stage Before Scaling

Every successful pattern involves identifying the specific stage that is limiting growth and fixing it before adding more acquisition input. Protocols that scale acquisition before fixing activation waste spend. Protocols that build retention features before understanding why users churn build the wrong features. The diagnosis comes before the intervention, without exception.

Validate at Small Scale Before Committing

Successful protocols test changes on small, defined cohorts before making them the default experience. This is not caution for its own sake. It is the only way to know whether an observed improvement is real or a novelty effect. The small-cohort validation step is consistently present in growth attempts that compounded and consistently absent in those that produced one-time spikes.

Measure the Baseline, Not the Peak

The diagnostic question in every playbook is the same: what is the baseline doing? Peaks during campaigns and experiments are expected and nearly automatic. The signal is the floor after the intervention ends. Successful protocols track their baselines between campaigns with the same attention they give to campaign-period peaks.
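
A minimal sketch of the peak-versus-floor comparison, assuming a daily active wallet series and known campaign start and end positions in that series. Using the median as the baseline is a choice made for this example, and the numbers are hypothetical.

```python
from statistics import median


def baseline_shift(daily_active: list[int], campaign_start: int, campaign_end: int) -> dict[str, float]:
    """Compare the pre-campaign baseline with the post-campaign floor.

    campaign_start and campaign_end are list indices; campaign_end is exclusive.
    """
    pre = daily_active[:campaign_start]
    during = daily_active[campaign_start:campaign_end]
    post = daily_active[campaign_end:]
    if not pre or not during or not post:
        raise ValueError("need data before, during, and after the campaign")
    pre_baseline = median(pre)
    post_floor = median(post)
    return {
        "pre_baseline": pre_baseline,
        "peak": max(during),
        "post_floor": post_floor,
        "floor_change": post_floor / pre_baseline - 1,
    }


# Hypothetical series: 4 days before, 3 campaign days, 4 days after.
series = [100, 110, 90, 100, 300, 450, 400, 120, 105, 100, 95]
result = baseline_shift(series, campaign_start=4, campaign_end=7)
print(result)
```

In this example the peak is 4.5 times the pre-campaign baseline while the floor moved by a few percent. In practice the post window should start after the campaign's tail has decayed, otherwise the floor is overstated.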

Use Product Changes to Fix Structural Problems

In every case where a structural growth problem was resolved, the fix was a product change, not a campaign. Incentives were used to seed loops and accelerate product-driven growth, but they did not substitute for the product fix. Protocols that tried to use incentives to solve structural activation or retention problems reproduced the same results at higher cost.

Build the Loop Before Funding It

Successful protocols identify the specific causal chain by which usage generates more usage before investing in seeding that loop. The liquidity loop protocol concentrated depth before running broad incentives. The activation protocol validated improved conversion before resuming acquisition spend. The retention protocol built the recurring job before launching re-engagement communications. In each case, the loop mechanism came before the spend that fed it. The growth loops guide covers the loop design process in full.

Mistakes Repeated Across Failed Growth Attempts

The failures in these playbooks are not random. The same mistakes recur across different protocols, different team compositions, and different market conditions. Recognising them is more reliably useful than studying what worked, because what works is often context-specific, while what fails tends to generalise.

Treating campaigns as experiments. A campaign run without a defined hypothesis, a control, and a pre-registered success condition is not an experiment. It is activity. Most DeFi teams run activity and call it a growth programme. The DeFi growth experiments guide covers the distinction in detail.

Running incentives before the product can retain. Incentives accelerate the trend that already exists. If the product cannot retain users, incentives produce more churned users faster. This mistake recurs in every protocol category and at every stage of maturity.

Fixing the wrong funnel stage. Activation problems diagnosed as acquisition problems. Retention problems treated as re-engagement communication problems. Structural problems addressed with execution optimisation. Each mismatch produces a result that looks reasonable in the short term and collapses when the intervention ends.

Measuring success by peaks rather than baselines. A protocol that celebrates campaign-period TVL and active wallet numbers without tracking the post-campaign floor is optimising for the appearance of growth rather than its substance. The baseline is the only number that tells you whether anything actually changed.

Copying playbooks without adapting to context. A growth mechanic that compounded for a mature DEX with deep liquidity may produce nothing for an early-stage lending protocol with no retention baseline. The mechanic is only one input. The protocol's stage, user type, and core action determine whether the mechanic applies.

👉 Identifying which playbook applies to your protocol? Formo shows you where your funnel is breaking: activation, engagement, or retention, at the wallet level, so you can match the right playbook to the right problem rather than guessing. See how it works.

How to Adapt Playbooks to Your Own Protocol Stage

A playbook is only useful if it is adapted to the context in which it will be applied. The three playbooks above are archetypes, not blueprints. The adaptation process is the work.

Step 1: Identify Your Current Binding Constraint

The binding constraint is the single stage that, if fixed, would unlock the most growth. It is almost always one of three things: activation rate (wallets connect but do not transact), engagement depth (users transact once but not again), or retention floor (the baseline between campaigns is flat or declining). Measure all three before deciding which playbook to draw from. Drawing from the wrong playbook because the diagnosis was skipped is the most common adaptation failure.

Step 2: Match the Playbook to the Constraint

Each playbook addresses a different binding constraint. If liquidity depth is gating activation and engagement, the liquidity-driven loop is the reference point. If activation rate is the primary problem, the activation-focused playbook is the reference point. If retention is flat despite reasonable activation, the retention-first playbook is the reference point. Use the playbook whose starting condition most closely matches your current situation.
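
The matching step can be sketched as a lookup from the weakest measured stage to a playbook. The heuristic here, picking the metric with the largest shortfall relative to a target you set yourself, is an assumption made for illustration and a crude stand-in for judging which fix would unlock the most growth. Metric names, observed values, and targets are hypothetical.

```python
from typing import Optional

PLAYBOOK_FOR = {
    "liquidity_depth": "liquidity-driven growth loop",
    "activation_rate": "activation-focused playbook",
    "retention_rate": "retention-first playbook",
}


def binding_constraint(observed: dict[str, float], targets: dict[str, float]) -> Optional[str]:
    """Metric furthest below its target, or None if every target is met."""
    shortfalls = {}
    for name, target in targets.items():
        if target <= 0:
            raise ValueError(f"target for '{name}' must be positive")
        shortfalls[name] = max(0.0, 1 - observed[name] / target)
    worst = max(shortfalls, key=lambda name: shortfalls[name])
    return worst if shortfalls[worst] > 0 else None


# Hypothetical measurements, each with a target the team chose itself.
observed = {"liquidity_depth": 0.90, "activation_rate": 0.08, "retention_rate": 0.18}
targets = {"liquidity_depth": 1.00, "activation_rate": 0.20, "retention_rate": 0.20}

constraint = binding_constraint(observed, targets)
print(constraint, "->", PLAYBOOK_FOR.get(constraint, "no playbook needed"))
```

The output is a starting point for discussion. The starting conditions described in each playbook remain the real test of fit.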

Step 3: Adapt the Sequence, Not Just the Tactics

The value of a playbook is in the order of moves, not in any individual tactic. The activation playbook works because acquisition is paused before the funnel is fixed, and the fix is validated before spend resumes. Taking just the tactic (simplify the landing page) without the sequence (measure first, validate on small cohort, then scale) produces a one-off UI change without the evidence that it actually moved the metric.

Step 4: Define Your Own Success Conditions

Each playbook has implied success conditions: competitive liquidity depth before expanding, improved activation rate on a small cohort before resuming acquisition, measurable improvement in the day-30 (D30) return rate before scaling retention spend. Adapt these to your protocol's specific metrics and thresholds. The conditions from the playbooks are starting points, not universal standards. What constitutes a meaningful activation improvement for a yield aggregator may be different from what it means for a DEX. Set your own conditions explicitly, in advance, before running the adaptation.
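
Writing the condition down before the run can be as simple as a small immutable record. The sketch below uses a frozen dataclass so the threshold cannot be changed once results come in. The field names, the day-30 return rate example, and the 20% relative lift are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessCondition:
    metric: str
    baseline: float
    min_relative_lift: float  # e.g. 0.20 means "at least 20% above baseline"
    window_days: int

    def threshold(self) -> float:
        """Lowest observed value that counts as success."""
        return self.baseline * (1 + self.min_relative_lift)

    def is_met(self, observed: float) -> bool:
        return observed >= self.threshold()


# Hypothetical condition, fixed before the experiment starts.
condition = SuccessCondition(
    metric="d30_return_rate",
    baseline=0.10,
    min_relative_lift=0.20,
    window_days=30,
)

print(condition.is_met(0.13))  # observed rate above the threshold
print(condition.is_met(0.11))  # observed rate above baseline but below the threshold
```

Because the record is frozen, moving the goalposts requires creating a new condition, which leaves a visible trail.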

Step 5: Run the Experiment, Not the Playbook

Treat the playbook as a source of hypotheses, not as a confirmed prescription. Each move in a playbook is a hypothesis that worked in a specific context. Your context is different. Run each move as an experiment with a defined metric and a comparison baseline. If the result confirms the hypothesis in your context, extend it. If it does not, the playbook is telling you something important about how your protocol differs from the archetype. That information is valuable. Use it to generate the next hypothesis rather than concluding that growth is not possible.

The Bottom Line

Playbooks are compressed learning. They represent what a team tried, in what order, with what result, distilled into a sequence that can be understood and adapted faster than the original team learned it. The three playbooks in this article are not the only patterns that produce compounding DeFi growth. They are three of the most common, presented honestly, including what failed.

The limiting factor in applying any playbook is the quality of the diagnosis that precedes it. A team that knows precisely where its funnel is breaking, which stage has the largest drop-off, and what the baseline looks like between campaigns can adapt a playbook to its context with real precision. A team that skips the diagnosis and jumps to the tactics will reproduce the same failures the playbooks document. For a complete system of how to measure, diagnose, and act on your protocol's growth data, see the full onchain growth series.

Apply These Playbooks With Real Data Using Formo

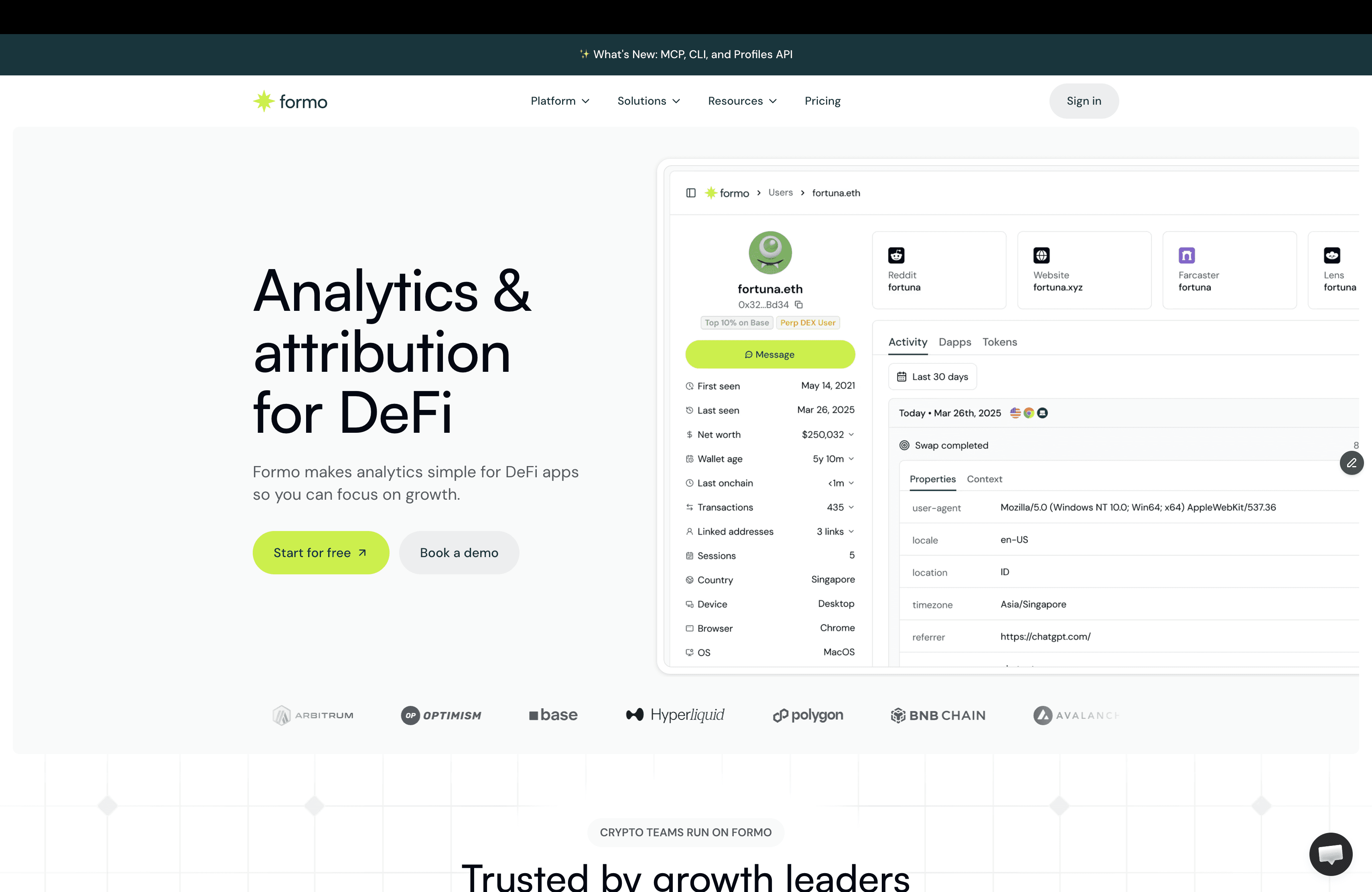

You can’t improve what you don’t measure. Formo makes analytics and attribution simple for DeFi apps, so you can focus on growth.

Every step in the playbooks above requires one thing: accurate data on where users are dropping off and what the baseline looks like between campaigns. Without that data, playbook adaptation is guesswork. Formo is the analytics and growth platform built for onchain apps that gives DeFi teams the funnel visibility, cohort analysis, and attribution data the playbooks depend on.

For DeFi teams adapting these playbooks to their own protocols, Formo provides:

Full funnel data from connect through to repeat transaction via Formo's analytics, so you can identify the binding constraint before choosing a playbook

Cohort-level retention analysis via retention analytics, so you can measure D30 return rates and baseline trends between campaigns

Acquisition source attribution via onchain attribution, so playbook experiments can be validated against cohorts from specific channels

Wallet segmentation through wallet profiles, separating the retained minority from one-time users and incentive farmers so you can study the right cohort

Ask AI to surface your binding constraint and the relevant playbook match, without SQL or a data team

DeFi teams including Kyberswap and WalletConnect use Formo to drive growth onchain.

Explore the Onchain Growth Series

This article is part of Formo's onchain growth series, a collection of practical guides for DeFi founders and growth teams covering the full post-launch lifecycle. Each guide goes deep on a single growth challenge with frameworks you can apply directly to your protocol.

FAQs About Onchain Growth Playbooks

Are these playbooks real or just theory dressed up as case studies?

These playbooks are based on patterns observed from real DeFi protocol launches and growth experiments. They describe what teams actually tried and what changed in usage, not idealised frameworks. Results vary by protocol type and stage. You should expect adaptation, not copy-paste outcomes.

Can I just copy one of these playbooks and expect the same results?

No, copying a playbook rarely produces the same results. Most playbooks only work under specific conditions like liquidity depth, user type, and protocol maturity. Blind copying often recreates the same failures. Playbooks work best as starting points for your own experiments.

Why do the same growth tactics work for some protocols but fail for others?

The same tactics work differently because protocols have different users, risks, and core actions. What compounds on a DEX may fail on a lending or yield protocol. Context matters more than the tactic itself. Ignoring context is a common cause of failed growth attempts.

Are these playbooks useful for early-stage protocols with no traction yet?

Yes, playbooks can help early-stage protocols avoid common mistakes, but they do not replace product validation. Many playbooks assume some baseline liquidity or users. Without a usable core product, growth patterns will not stick. Early-stage teams should treat playbooks as guardrails, not growth engines.

Do case studies hide the failures and only show wins?

No, honest case studies include failed experiments and tradeoffs. Growth attempts often fail before something works. Ignoring failed attempts leads teams to repeat the same mistakes. Learning from what broke is often more useful than copying what worked.